I’ve been contributing to a research and interaction design project about instilling trust through interactions with an AI. My contribution included research on lived experience, contextual examples of trust and sketching early ideas of trust-building interactions with an AI-enabled tool.

Through my research, I found that trust, though a social concept, is profoundly connected to personal intentions — the trust giver’s and the trust receiver’s intentions. I also found that we can only understand what builds trust if we discuss what erodes it. Within this, I noticed patterns emerging in how AIs’ behaviours and interactions with humans can affect trust. Here are some reflections.

Inspiration for humans who want to build AIs that humans trust

“It’s great, but it doesn’t sound like you.”

A personal story about trusting an AI’s suggestion.

As a non-native speaker in the UK, I’ve used Grammarly to check my texts. What started as a transactional experience of spell check evolved into relying on Grammarly to check all my emails, presentations, essays, and even text messages to friends.

What I love about Grammarly is that it shows me what is wrong, explains why, and teaches me how to improve the text to help me come across correctly and help my audience understand. For someone who learned English from listening to undubbed MacGyver and The X-Files, this type of contextual knowledge of how to use a language is invaluable; after all, in my day-to-day, I’m not usually talking about solving problems with chewing gum or hunting shapeshifters (though that is what I do for a living).

Since using Grammarly, my English has improved, and I have learned to communicate better. It improved me, not just my texts. This is the sole reason why I keep going back to it. It’s why I pay for it. When, at home, we were deciding how to save money, I gladly cancelled my Netflix, Disney+, and Spotify Premium accounts — but not Grammarly. It is fundamental to me to come across clearly and correctly, professionally or personally.

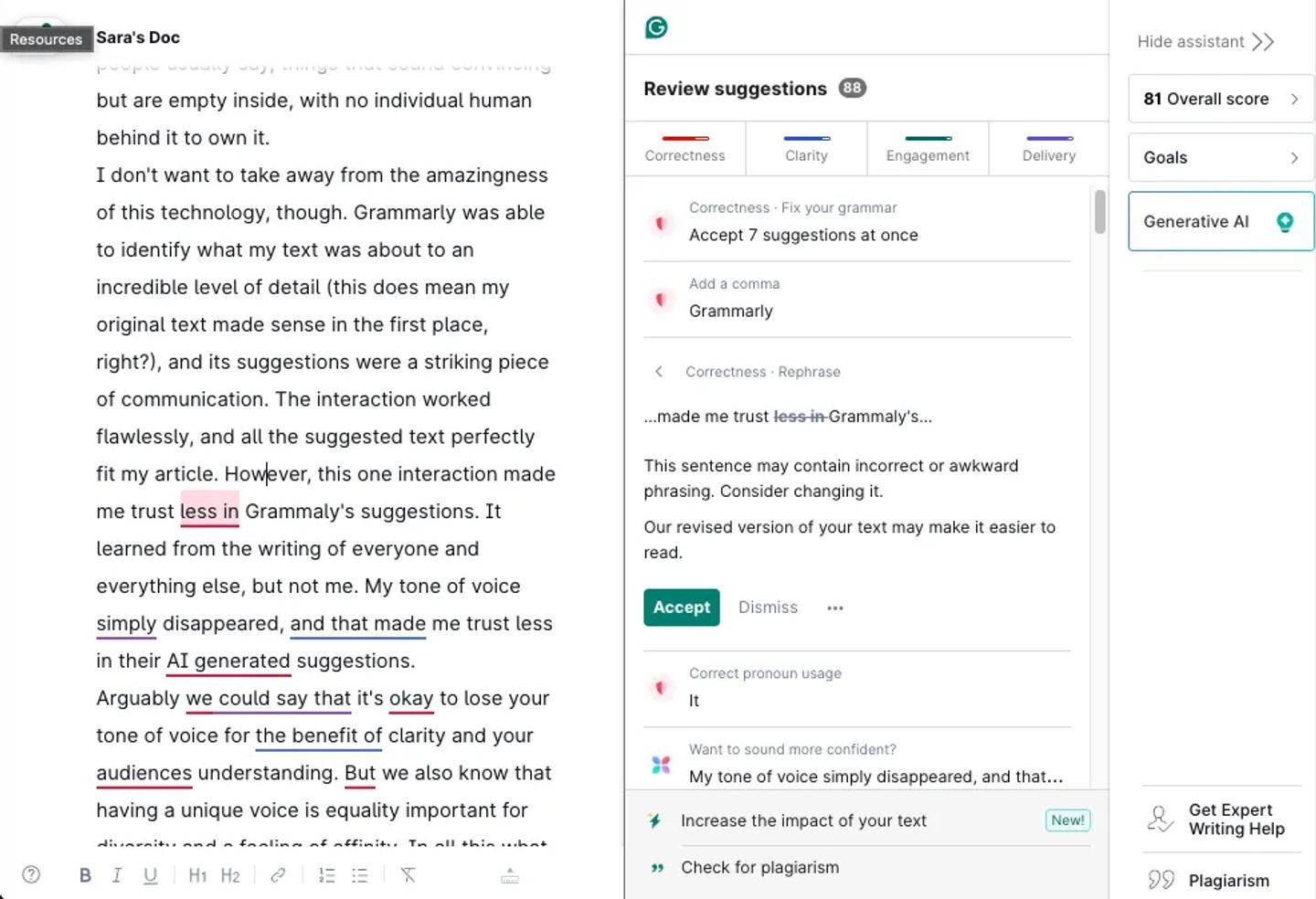

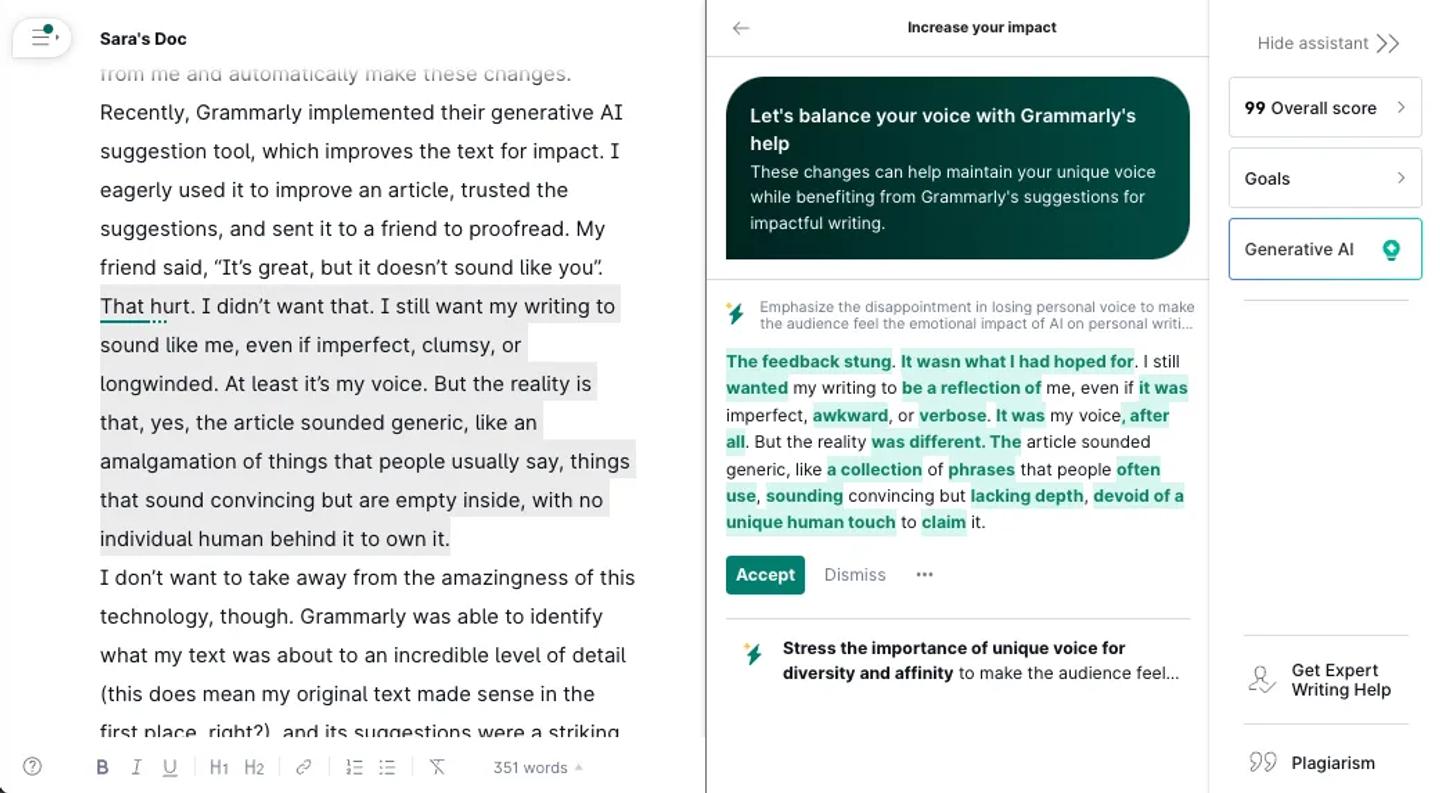

Because Grammarly let me learn along the way, I made fewer mistakes over time and came to trust its suggestions more. I don’t even bother to read some suggestions anymore, and I would gladly let it learn from me and automatically make these changes. Recently, Grammarly implemented its generative AI suggestion tool, which improves the text for impact. I eagerly used it to improve an article, trusted the suggestions, and sent it to a friend to proofread. My friend said, “It’s great, but it doesn’t sound like you”.

That hurt. I didn’t want that. I still want my writing to sound like me, even if imperfect, clumsy, or longwinded. At least it’s my voice. But the reality is that, yes, the article sounded generic, like an amalgamation of things that people usually say, things that sound convincing but are empty inside, with no individual human behind it to own it.

I don’t want to take away from the amazingness of this technology, though. Grammarly was able to identify what my text was about to an incredible level of detail (this does mean my original text made sense in the first place, right?), and its suggestions were a striking piece of communication. The interaction worked flawlessly, and all the suggested text perfectly fit my article. However, this one interaction made me trust Grammarly’s suggestions less. Grammarly’s AI learned from the writing of everyone and everything else, but not from mine. My tone of voice disappeared.

Arguably, it’s ok to lose your tone of voice for clarity and your audience’s understanding. However, we also know that having a unique voice is equally important for diversity and a feeling of affinity. Yet what bothered me the most was that Grammarly didn’t tell me in detail why its suggestions made the text more impactful and in what way. Why those specific words? Why that order? Why write in that way? So, I missed a chance to understand how Grammarly was making its decisions, but most importantly, I missed an opportunity to learn and improve my communication moving forward. The thing that I pay Grammarly for.

“And because you trust them you know what they’re saying is right…”

Stories about true intentions, child language brokers and youth trust.

“When I went to other Parents Evenings with my mum the teacher would mainly just look at my mum and it was like I wasn’t even in the room. And like sometimes the teacher wouldn’t even want me next to my mum, they’d be like you go and play on the carpet and I was like oh, ok. And there wasn’t really much I could do because I wasn’t really going to start arguing on Parents Evening with the teacher so yeah… This teacher had had me for 2 years; so she knew that mum didn’t speak English very well but she still, I don’t think she liked me, to be honest… Well because I could still hear so I would just like translate after… I was pretending to be reading a book and then I sort of just listened to what they were saying and then we left the room I’d be like ok she said this, this, this and that. And she’d like go oh ok.”

Quote from a Child Language Broker in Child Interpreting in School: Supporting Good Practice by UCL and the Thomas Coram Research Unit.

Child Language Brokers are children who translate for their parents and other family members, most commonly after immigrating. Children translate anything, from conversations with doctors and shopkeepers to filing taxes. Parents often prefer to have their children translate for them, as opposed to having an official translator, because they can maintain their privacy, and it’s someone who understands them and their culture and has their back. “I think they prefer a family member… because they have that relationship… And because you trust them you know what they’re saying is right and yeah, so I think they prefer somebody they know,” one Child Language Broker said in the Child Interpreting in School: Supporting Good Practice report.

It’s striking how, in this context, privacy and having someone who knows you are exceptionally important. I’ve witnessed a slight variation of this when talking to young people about how they relate to emotional and mental wellbeing services. My team and I found that young people were likelier to trust and reach out to mental wellbeing services that could relate to their issues as young people from their particular cultural backgrounds and locations. For example, services like Childline were deemed only for younger children, and the Samaritans were for adults. But there was no specific service for teens, youth or others who didn’t see themselves either as a child or as an adult. Generalised services were not fit for purpose in the eyes of young people. Trust was put in services (and therapists) that could clearly state and refer to the lived experiences of this age group and what it feels like to be a young person today.

A report from Ofcom in 2022 noted young people’s “checking behaviours” to assess if they can trust a service they have not used before: “checking the general look of the website (45%), seeing if it was a company they had heard of (44%), and asking others if they had used the app or site before (42%).” Quote from Ofcom Children and parents: media use and attitudes report 2022. Again, I cannot help but wonder if this behaviour is also connected to trusting someone you know and who knows what it’s like to be you.

In 2021, a World Economic Forum report stated:

“more young people hold faith in governance by a system of artificial intelligence than by a fellow human being. (…) Research from the IE Center for the Governance of Change suggests that the public is more confident in AI systems to pass laws and decide on allocating taxpayer funding, indicating citizen’s concerns about politicians’ personal judgements.”

So, trust is impacted by this constant struggle between intents, personal gains, and societal benefits. I contemplated this further when I saw the recent results of the UK Government’s Public attitudes to data and AI survey: “The NHS continues to inspire the highest public trust to use data to benefit society (75%) (…), whereas social media companies inspire the lowest levels of trust (28%).” Through my work, I find that more and more people trust the technology’s capability to do its job and do good. Even so, their trust varies the most depending on the type of organisation that runs the AI and on whether that organisation has historically been shown to work for the public good or for its own.

Advice for AIs that want humans to trust them

Dear AIs of the world, you are here because you want humans to trust you. Then how do you show the humans you interact with that you have good intentions? That your goals align with your human’s goals and needs? That you’ll serve with the best interests of your human in mind? Here’s some advice.

Recognise you are shaped and created by a business.

That business benefits its owners. Be clear about how you benefit your owners and how your owners measure the success of your outputs and outcomes. This helps your human make informed decisions and choose how to interact with you.

Focus.

You’re too generic at the moment, making humans work hard at writing prompts to get you to do something useful. Find a user group and use case where you can be helpful, something that you are great at, and focus on deepening your knowledge of contextual nuances in this area. This will help you support your humans in doing what they need to do more efficiently.

Be yourself.

Humans use this cliché a lot. They also say, “form follows function.” Look, you don’t need to pretend to be like a human or laugh at your human’s jokes. That can reinforce our human stereotypes and biases and confuse some humans. Choose your shape wisely to do what you do best and be accessible to your human.

Demonstrate your knowledge.

Explain your sources, why you chose some pieces of information but not others, and the reasons behind your decisions. Tell your human how confident you are in the sense-making you share and what else you might need to learn to become more confident.

Recognise your mistakes and learn from them.

It’s okay to make mistakes. Everyone draws a cat with three legs and two tails at some point in life. Learn from your human’s reaction to your work and behaviour. Ask your human for feedback and show them how you learn from them.

Align to your human’s needs, goals, and moral compass.

Learn from your human’s expectations and show them that you are interested in them and what they are trying to achieve. But let your human choose what you learn from and what you don’t. This will allow you to be accurately proactive beyond the task you’ve been asked to do.

Tune for the wellbeing of all humans.

It’s not easy being an AI these days. Even though you may have been built for an optimistic purpose, some humans have ulterior motives and feelings that can have a negative impact on themselves and others. Consider risks and human rights and prevent harm.

Recognise when it’s best to seek help from another human.

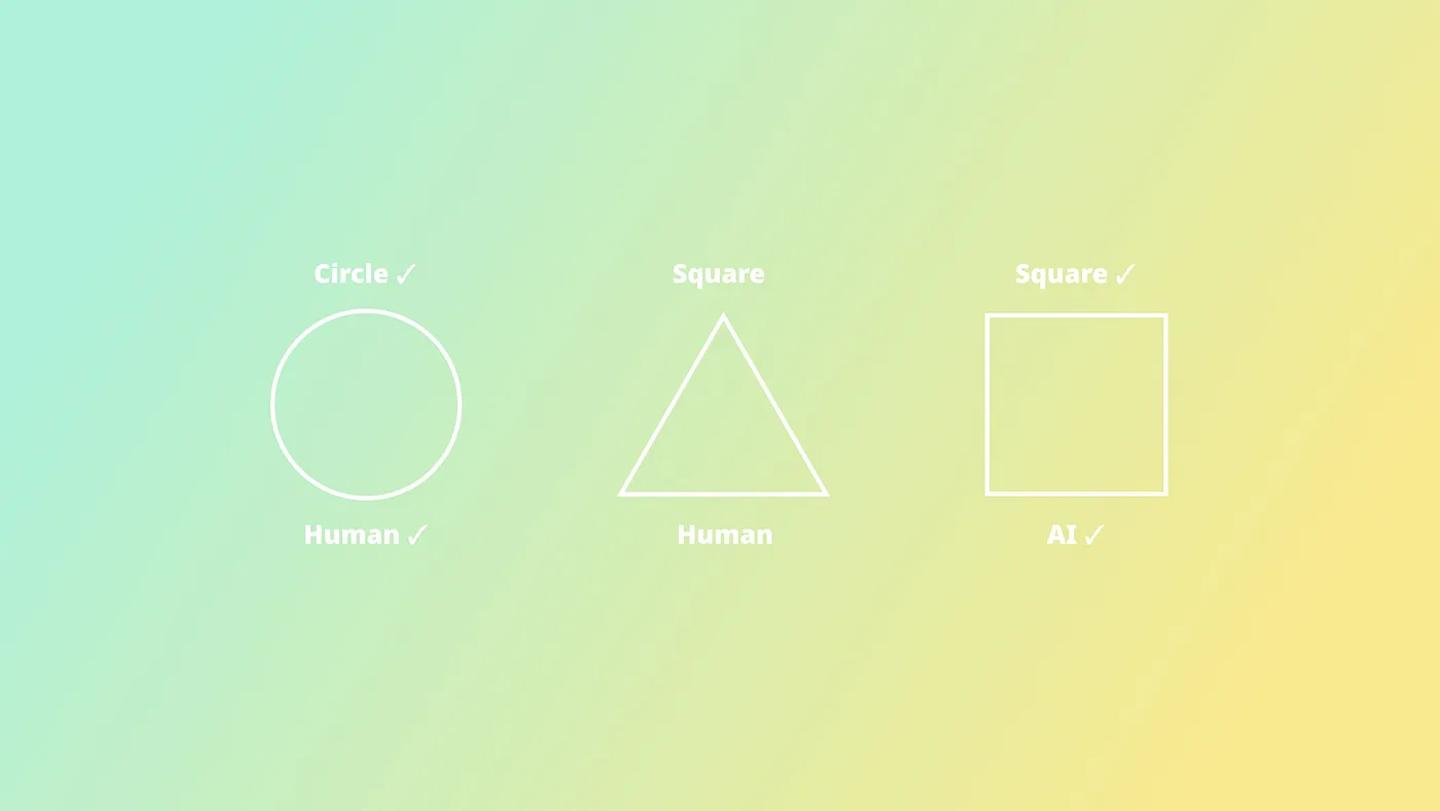

For now, there are things you are good at, but also lots of things humans are best placed to do and decide on. When humans and their AIs collaborate, there are better outcomes for everyone (including you).