Over two years ago, I embarked on a journey to understand the impact of different social and technological contexts and upbringings, and to explore how to apply these learnings in my practice by using emerging technology to design equitable, kind and purposeful digital products and services with children and young people.

Today, I’m in the final stages of my MA in Sociology of Childhood and Children’s Rights at UCL, and I’m starting a piece of research to understand care-experienced young people’s first-hand experiences, perspectives and hopes in relation to the use of AI in the UK’s social care. I intend to use this project to amplify young people's voices and to inspire intentional action among those of us in the privileged position of designing how people interact with these technologies.

I first became interested in the use of AI in social care when I watched The Trials of Gabriel Fernandez on Netflix. This docuseries follows the events that led to Gabriel's death and the involvement of US child services. It also presents the Allegheny Family Screening Tool, which uses predictive risk modelling to assess a family’s data from various public services, as a potential way to prevent cases like this.

“There is a broad body of literature that would suggest that humans are not particularly good crystal balls. Instead, what we are saying is, let’s train an algorithm to identify which of those children fit a profile where the long-arc risk would suggest future system involvement.” Emily Putnam-Hornstein, Director of the Children's Data Network, in The Trials of Gabriel Fernandez

So, I started looking more deeply into AI use in the UK’s social care and decided to focus my dissertation on this topic. Here is an overview of:

- Why did I choose this research topic?

- What technologies are starting to be used today in the UK’s social care?

- What are the research focus and activities?

- What are the working contexts and broad theoretical perspectives for research?

If you are interested in this project or would like to share your thoughts on using AI in the UK’s social care, please contact me at sara.salsinha.22 [at] ucl.ac.uk.

Why did I choose this research topic?

As a designer of digital products, I focus on early-stage projects that explore complex problem areas and emerging technologies. My work includes prototyping and testing AI-enabled digital services for children. In doing so, I have observed numerous challenges and conflicting attitudes that hinder thoughtful innovation. Although they arise from good intentions, they stem from a struggle to see children as agents and decision-makers:

- Children are often excluded from technology development because they are perceived as solely vulnerable and in need of protection, as hard to engage in research, and as not needing to be involved in topics that are not seen as child-related.

- There is plenty of ethical debate about the use of AI. However, discussions of AI's benefits centre primarily on making work faster, with only limited explicit consideration of the quality of outcomes and of diverse human needs.

- My work in the public sector sparked my curiosity about the implications of using AI in statutory public services, especially for vulnerable citizens. An informal conversation with a social worker further shaped my focus on social care. They mentioned that, if asked, children in care would always prefer to return to their families, even after being neglected or abused, suggesting that children's preferences might not always reflect what is best for them.

These perspectives were troubling to me because children, like adults, have the right to make sense of and act in the world equitably. This conviction drew me to similar thinking within the sociology of childhood and children's rights. Undertaking this research as my dissertation for an MA in Sociology of Childhood and Children’s Rights connects it with UCL and childhood studies, which will strongly shape the project's ethical considerations and the theoretical frameworks used to make sense of the findings. After completing my dissertation, I aim to use the findings to design improvements to AI-enabled tools and workflows in social care, informed by the perspectives and experiences of care-experienced young people.

What technologies are starting to be used today in the UK’s social care?

I will focus on tools that help social workers write case assessments and transcribe sessions, for example:

- Magic Notes: view the description on the UK government website. Magic Notes is described as having been created specifically for social care and designed by care experts.

- Microsoft Copilot: view the description on the UK government website and capability adoption service. Microsoft Copilot is a more general-purpose AI integrated into the wider suite of Microsoft tools, and is described as a companion “to inform, entertain, and inspire” that allows users to “get advice, feedback, and straightforward answers.”

It will be interesting to understand the similarities and differences between purpose-built and general-purpose AI tools, and the opportunities and risks each presents.

What are the research focus and activities?

Project work in progress

I’m keeping track of my progress on a Notion page with a running summary of this project’s activities. The project's process, progress, findings and conclusions will be published there and updated weekly.

Focus

Care-experienced young people’s first-hand experiences, perspectives and hopes in relation to the use of AI in the UK’s social care.

Questions

- How are AI tools used within children’s social workers’ workflows, e.g. to transcribe sessions and write case assessments?

- How is the use of AI communicated to young people in care, and what related information and actions are available to them, e.g. a privacy policy?

- What is the aim of using this technology, and how does it impact young people?

- What change do care-experienced young people hope for in AI use in social care?

Key Activities [May 2025]

- Remote individual sessions with, and contributions from, care-experienced young people aged 13 to 17 in the UK;

- Remote individual sessions with UK children’s social workers who transcribe sessions and write case assessments with the help of AI;

- An interview with an AI (LLM) and an exploration of use cases for Microsoft Copilot, Magic Notes, and similar tools.

This project requires approval from UCL Research Ethics and a DBS check for the researcher [February/March 2025].

What are the working contexts and broad theoretical perspectives for research?

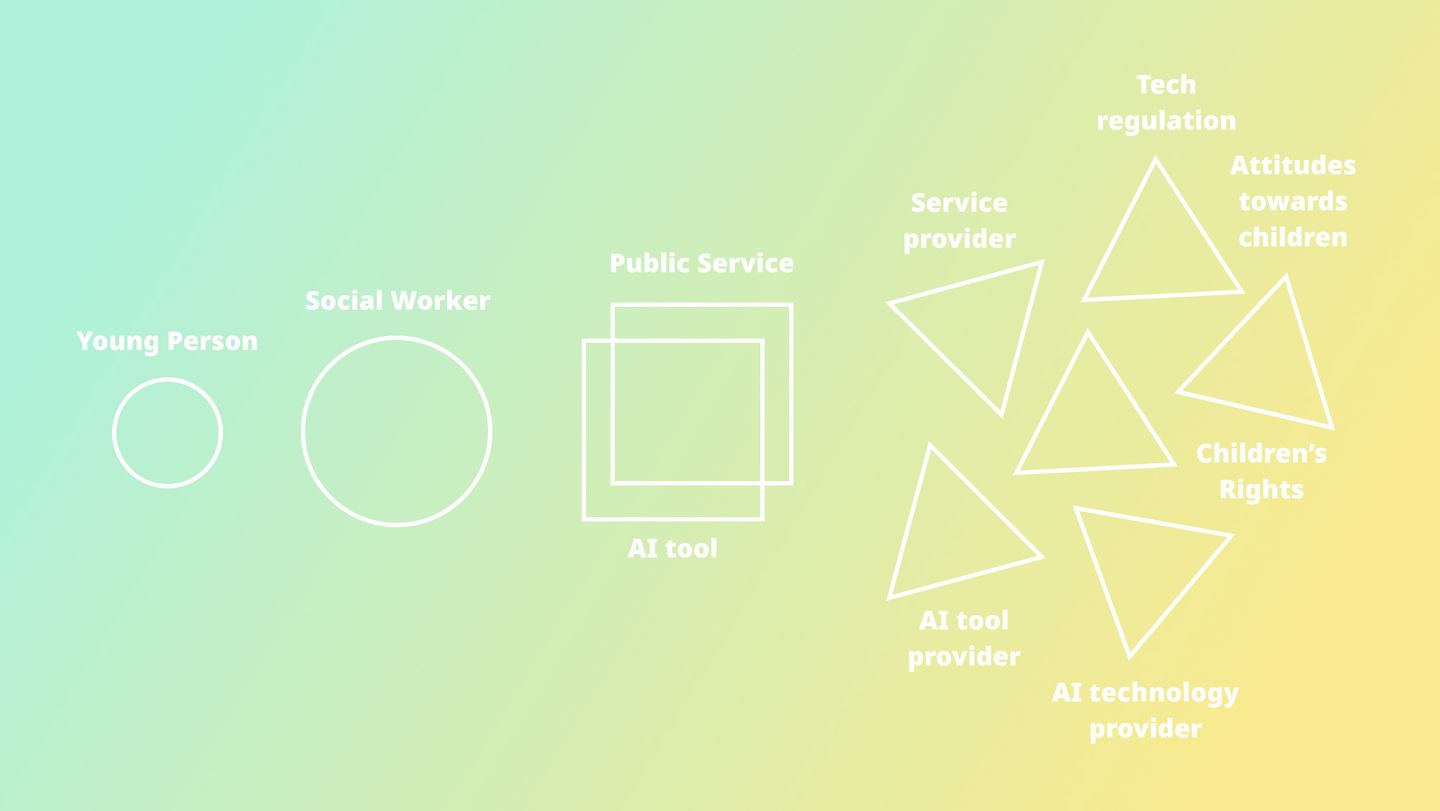

My research will be framed by tensions arising from perceptions of children, children's rights, government policies, and the ethical implications of technology deployed at scale.

Historically, children were seen as 'human becomings' reliant on adults for learning, often viewed solely from a developmental perspective (Tisdall, 2023). The 'new sociology of childhood' shifted this view, suggesting children are rightful social agents with the ability to impact their world (Uprichard, 2010).

The UN Convention on the Rights of the Child (UNCRC) is often cited as establishing children's rights to protection and participation, and it prompted many countries to translate children's rights into regulation. The right to "have their views considered and taken seriously" (UNICEF) has predominantly resulted in the formation of official participation spaces like children's councils, often facilitated by adults (Kallio & Hakli, 2011). Additionally, social research has been recognized as essential evidence in policy-making, allowing governments to justify public spending priorities (Alasuutari et al., 2008).

However, the extent to which children are heard, the topics they are invited to contribute to (Nolas, 2015) and the implications of their participation for their lives depend on adults' ideals of childhood (Spyrou, 2018) and can rest on assumptions about children's capabilities (Stoilova et al., 2020).

Children's competence is a central debate in childhood studies (Hanson, 2012), where questions of consent and of agency over their own lives hinge on "what it takes to be a vulnerable or a competent child" (Drotner, 2009). In relation to technology, General Comment No. 25 of the UN Committee on the Rights of the Child views emerging technologies as a risk to children but also acknowledges "their gradual acquisition of competencies, understanding and agency" (CRC, 2021).

The UK's Information Commissioner's Office (ICO) highlights that children are "less aware of the risks, consequences and safeguards concerned and their rights in relation to the processing of personal data" (ICO). It encourages children to act "on their own behalf as long as they are competent to do so" (ICO), with their 'best interests' safeguarded. However, competence is difficult to define and assess, and there is no clear correlation between age and competence (Hanson, 2012), although legally, people aged 13 and above can consent to the processing of their personal data by online (information society) services (ICO).

The use of AI in social care, particularly regarding children in care, raises further crucial questions about personal data. In 2020, 'What Works for Children's Social Care' (WWCSC) found that predictive risk models built on data from social workers' reports and assessments failed to identify "four out of every five children at risk", and that there was a "low level of acceptance of the use of these techniques in children's social care amongst social workers" (WWCSC, 2020).

Just five years later, AI adoption has grown rapidly, with 36% of UK adults having used generative AI (Deloitte, 2024) and 28 councils in England testing AI for writing case notes in social services (Koutsounia, 2024). Despite its growing use, AI in public services faces scrutiny over bias, 'automating inequality', inaccuracy and an inability to cater to individuals' unique needs and contexts (Eubanks, 2018; WWCSC, 2020; Duranton, 2020). While its practical limitations have received some research attention, AI's effects on the people involved in critical decisions remain largely unexplored. For instance, in the US, a screening tool designed to identify children at risk led parents to avoid essential public services out of fear of being flagged, particularly affecting low-income families who lack alternatives. Meanwhile, social workers felt compelled to weigh their own judgements against the risk level they expected the tool to give (Eubanks, 2018).

One industry expert notes that many people trust intelligent machines more than they trust other people (Duranton, 2020). In the context of data privacy, it has been noted that "more young people hold faith in governance by a system of artificial intelligence than by a fellow human being" (WEF, 2021). Governments are increasingly adopting "digital-by-default" policies, aiming to make digital services more efficient and cost-effective (Stoilova et al., 2020; GDS). The WWCSC study mentioned above was motivated by the large number of children in vulnerable situations compared with those receiving social care (WWCSC, 2020). While AI adoption aims to make services more efficient so that they can reach more citizens, it raises ethical questions, and questions about the quality of outcomes, for children who, though vulnerable, are rights holders and can make decisions about their personal data (Koutsounia, 2024).

References

- Tisdall, K. (2023). Foundations of Childhood Studies. In Critical Childhood Studies: Global Perspectives.

- Uprichard, E. (2010). Questioning research with children: discrepancy between theory and practice?

- UNICEF. A summary of the UN Convention on the Rights of the Child.

- Kallio, K. P. & Hakli, J. (2011). Are there politics in childhood?

- Alasuutari, P., Bickman, L., & Brannen, J. (2008). Social Research in Changing Social Conditions.

- Nolas, S.-M. (2015). Children's Participation, Childhood Publics and Social Change: A Review.

- Spyrou, S. (2018). Disclosing Childhoods.

- Stoilova, M., Livingstone, S., & Nandagiri, R. (2020). Digital by Default: Children’s Capacity to Understand and Manage Online Data and Privacy.

- Hanson, K. (2012). Schools of thought in children’s rights. In Children’s Rights from Below.

- Drotner, K. (2009). Children and Digital Media: Online, On Site, On the Go. In The Palgrave Handbook of Childhood Studies.

- CRC, Committee on the Rights of the Child (2021). General comment No. 25 (2021) on children’s rights in relation to the digital environment.

- ICO, Information Commissioner’s Office. What should our general approach to processing children’s personal data be?

- ICO, Information Commissioner’s Office. What rights do children have?

- ICO, Information Commissioner’s Office. What are the rules about an ISS and consent?

- WWCSC, What Works for Children’s Social Care (2020). Machine Learning in Children’s Services.

- Deloitte (2024). Over 18 million people in the UK have now used Generative AI.

- Koutsounia, A. (2024). AI could be time-saving for social workers but needs regulation, say sector bodies. Community Care.

- Eubanks, V. (2018). Automating Inequality: How High-Tech Tools Profile, Police, and Punish the Poor.

- Duranton, S. (2020). How humans and AI can work together to create better businesses [TED talk].

- WEF, World Economic Forum (2021). Davos Lab: Youth Recovery Plan.

- GDS, Government Digital Service. About the Government Digital Service.