This is part of a series exploring care-experienced young people's perspectives on AI in UK children's social care. Read the introduction and overview here.

This article examines how AI is increasingly used to document children's lives in care—and what care-experienced young people fear about being "reduced to AI summaries." In this article, you'll explore:

- Why stories matter — a care-experienced young person's testimony reveals what's at stake when we discuss AI in care records

- How children's stories are documented and heard in care — How children are written about in records and why relationships with social workers matter

- AI as a storyteller — How AI tools are being used to transcribe and write assessments

- What care-experienced young people and social workers told me — Six key findings from my research on AI's potential and risks

- What this means for AI product development and social care practitioners — Practical steps to resist reduction and expand understanding

Why stories matter: Lamar's testimony

In February 2025, Lamar, a 19-year-old care-experienced young person, testified before the House of Commons Education Committee. During their years in care, Lamar struggled to maintain relationships with siblings, community, and local areas due to frequent placement changes. Calling for change, Lamar highlighted that they cannot remember most of their childhood and that they rewrite their own story, reminding themselves of "doing well for my age".

I open this article with Lamar's testimony because it reveals what's at stake when we discuss AI in children's social care. Ownership of story and identity is critical for children in care, with research showing that when care-experienced individuals later access their care records, it can support trauma healing, identity building, and memory recovery. Lamar's inability to remember their childhood—despite having care records documenting it—highlights fundamental questions: whose voices shape these records, how are children represented, and with AI increasingly used to document cases, what are the implications for how children's stories are told?

How children's stories are documented and heard in care

Just-a-child: unheard and filtered voices

How we think of children fundamentally shapes how we relate to them. As childhood studies scholar Rachel Rosen describes, dominant constructions depict childhood as "a time of happy innocence, but also an essentially vulnerable phase of life that required special protections", alongside perceptions of children as "wild or antisocial, requiring the firm regulation of an adult". These views profoundly influence 'adult-child power relations' within children's social care.

Research analysing case record practices reveals practitioners describe children through adult lenses, with assumptions often taking precedence over authentic representation. Practitioners dismiss children's voices—researchers documented them saying 'they're just children'—and limit involvement to child-appropriate areas. Children often lack unique identities in reports, becoming indistinguishable from siblings or portrayed solely through vulnerability.

Care records document family history, education, health, and views, serving dual purposes: evidencing decisions while 'constructing a social reality' about families. Research shows written evaluations often position children as objects of inspection rather than co-creators of their stories, missing children's own words and containing gaps that distort experiences.

Even when children are formally included, social workers have significant editorial discretion over how children's voices are interpreted. In a 2025 study, Roy and colleagues found that 60% of children weren't consulted about discharge procedures and only a third participated in court proceedings. Children's words are consistently filtered through professional interpretation, raising concerns about accuracy and about reproducing power imbalances. Misinterpretations, in turn, damage trust in social workers.

Why relationships with social workers matter for feeling heard

The Munro Review of Child Protection (2011) highlighted that trusting relationships with practitioners are central to enabling children's participation—a finding consistently replicated in subsequent research. Munro found that social workers felt unprepared to communicate with children and were constrained by administrative tasks. Over a decade later, the MacAlister Review (2022) and the House of Commons Education Committee (2025) continue to document these same challenges.

Frequent changes in social workers due to high staff turnover and heavy workloads create infrequent, inconsistent encounters, significantly undermining relationship-building and contributing to young people's lack of trust. Children engage more meaningfully when they have stable, consistent relationships with professionals who demonstrate genuine care and interest.

Studies spanning over a decade show children identify listening capacity as key to 'good' social workers: stopping when children speak, being present rather than distracted by paperwork, and showing genuine interest rather than assuming they know better. These relationships operate within what sociologist Berry Mayall calls generational order—systematic power structures where adults maintain authority over children. While complete equality remains unrealistic, reducing unnecessary inequalities becomes essential for children's participation.

AI as a storyteller

Increasingly, social workers use AI tools like Magic Notes and Microsoft Copilot to transcribe meetings, summarise them, and write assessments using large language models (LLMs). LLMs "predict and generate plausible language" by calculating the probability of words appearing next to each other. They can misuse words and reproduce embedded biases around race, gender, and religion, and are particularly prone to what AI researchers call 'hallucination' (producing unfounded information) and 'sycophancy' (over-agreeing to avoid conflict).
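For readers who want to see what "calculating the probability of words appearing next to each other" can look like, here is a deliberately tiny sketch. It is an assumption-laden toy, not how Magic Notes, Copilot, or any real LLM works: real models use neural networks over tokens rather than simple word counts, and the sample sentence and the function name `next_word_probabilities` are invented for illustration.

```python
from collections import Counter, defaultdict

# Invented sample text for illustration only; not drawn from any real record.
SAMPLE = "the child said the child felt safe and the carer said the child was happy"


def next_word_probabilities(text: str) -> dict[str, dict[str, float]]:
    """Count which word follows which, then turn the counts into probabilities."""
    words = text.split()
    counts: dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return {
        word: {nxt: n / sum(followers.values()) for nxt, n in followers.items()}
        for word, followers in counts.items()
    }


probs = next_word_probabilities(SAMPLE)
print(probs["the"])  # {'child': 0.75, 'carer': 0.25}
```

Even in this toy, the most frequent pattern in the source text ("the child") becomes the most likely continuation, which is one way to picture how patterns in historical records can be carried forward.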

These tools have demonstrated practical benefits—social workers report that automated transcribing allows them to focus more fully on conversations with children and families rather than note-taking. However, these technologies cannot recognise the non-verbal communication that practitioners are encouraged to interpret when working with children. If AI learns predominantly from historical social care records, it risks perpetuating the filtered, adult representations of children, where structured and probabilistic ways of organising text may overshadow complex narratives and individuality.

What care-experienced young people and social workers told me

Participants revealed that care records and the way children's stories are captured profoundly shape young people's experiences of care through what gets written—and how it gets written.

Manual note-taking creates inaccuracy

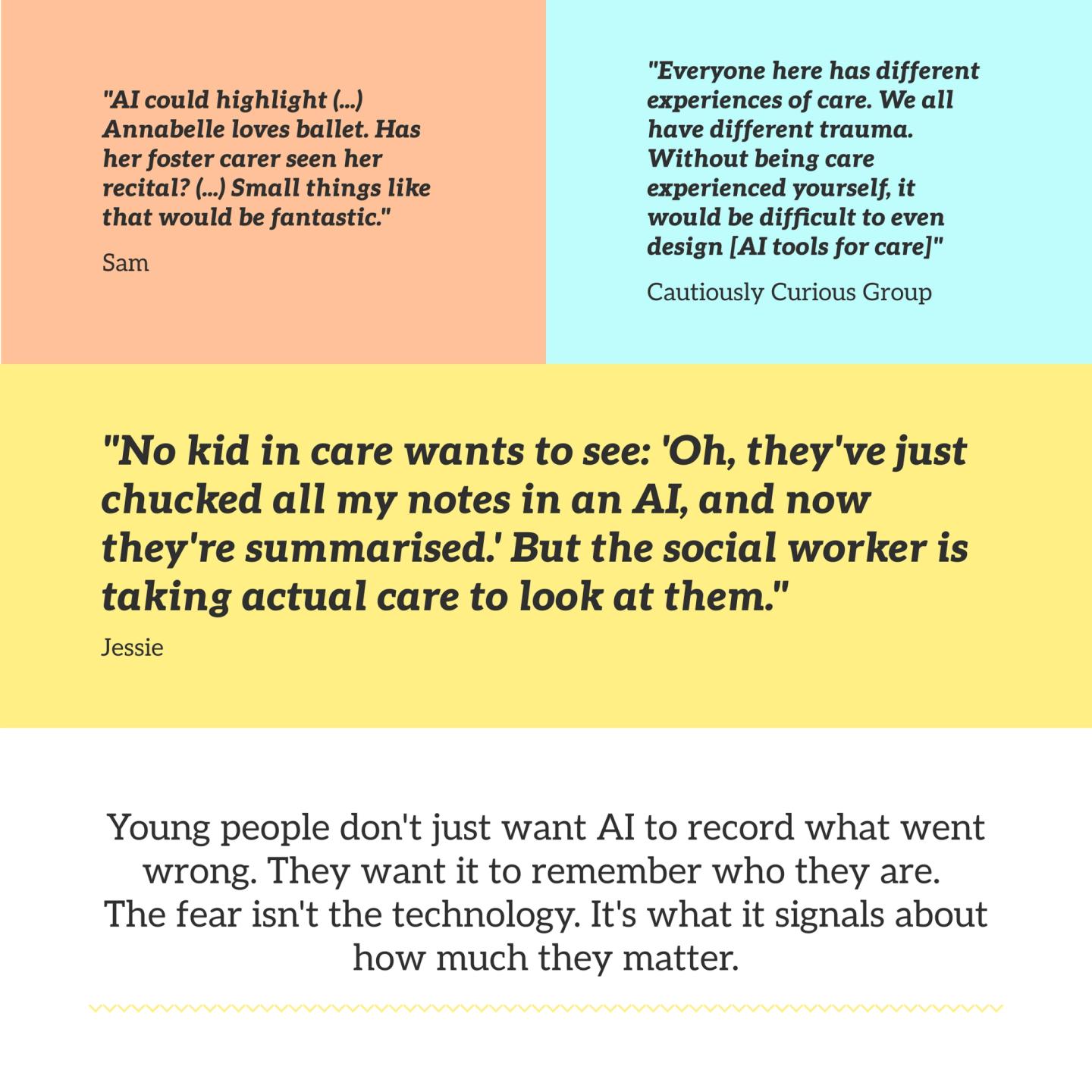

Sam described how time-constrained meetings led to vague notes with "really egregious issues sometimes from the really inaccurate note-taking." Information gets missed, misheard, or recorded without the nuance that shapes its meaning. All participants valued AI's ability to record precisely what was said—"help make sure that you get every detail" [Jessie], with potential to "stop fights or arguments with young people" [Cautiously Curious]. Sam warned against AI "chang[ing] the meaning inadvertently."

However, all participants recognised that AI makes mistakes—transcription errors, hallucinations, and misconstrued summaries, especially in the types of unstructured, natural conversations that emerge from direct work with families. The Sceptically Suspicious worried this "won't really save time in the long run."

The 'bad' things written about young people affect their care

Care-experienced participants described discovering awful things in their records only after leaving care—information they didn't have an opportunity to see or correct whilst it was actively shaping how they were treated. Sam explained a friend's particularly damaging experience—foster carers' complaints were recorded as fact, leading to progressively worse placements as each new carer read the file before meeting them.

The Independent SW (social worker) immediately identified how AI could disadvantage Black families: "who trains the AI around cultural nuance?" They gave examples of culturally respectful forms of address being misinterpreted as inaccurate names. Sam saw AI's potential to be "less biased than a human being" because it "seems more mathematical", but worried it could give higher risk scores to marginalised communities that have more involvement with services. The Independent SW worried: "My understanding is that AI learns as it goes along and we're learning then from a flawed system."

Social workers emphasised they don't want to rely solely on others' interpretations in care records. The Independent SW explained: "I would still want to ascertain information myself (...) because what one person describes as aggressive, I might not"—emphasising that "things are relationship dependent."

AI presence may affect how people feel and open up

The Safeguarding SW worried they'd become "conscious about what I say, because I know I'm being recorded" and might focus on assessment needs rather than relationship building. They shared that not all conversations would be appropriate to record "verbatim", especially when people are visibly distraught or during first meetings.

Care-experienced participants worried about feeling processed rather than cared for. Jessie explained: "the moment you start kind of introducing things that are almost going to help the social worker do their job you kind of might introduce a feeling of 'Oh I'm just their job to my social worker, I'm not actually being cared about.'"

Information in care records can be overwhelming and disconnected

Social workers explained having to manually piece together chronologies across multiple systems. The Independent SW was blunt: "What more likely happens in a lot of cases is we don't search for information because we don't have time for that. So we base our judgments and recommendations on the limited information that we do have easy access to."

All participants saw potential in AI creating more complete pictures. Sam highlighted the lack of multi-agency working in their own care—police were involved from age 8, but social workers seemed to have "no clue" when they finally entered care at 15. As the Independent SW explained: "if I want to know a list of all the episodes where alcohol's been involved, and what was the impact of those episodes, and AI then gives me that back, that information was already in existence in the child's file anyway, it's just saved me from hours of trawling entries to find it."

AI can generate richer records—yet records are not the whole person

Jessie and Sam were clear: AI can generate richer case records with authentic children's views, but records are not the whole person. Jessie wanted AI to capture "everything" for complete understanding, but recognised it "is not going to understand like dreams, aspirations, goals (...) whereas a human will."

Even when diverse professionals (police, medical, care) make notes, those notes are patchy fragments that relate only to the child's involvement with services. "They don't know that child. None of them do," Sam explained. "[W]hen you're in care, you're not really seen as a young person with passions and dreams. You're seen as a case."

All participants supported AI for admin work such as automated transcripts, initial summaries, and documentation. However, there was strong consensus that humans are essential because situations constantly change and context matters. Jessie worried that AI would hallucinate around human topics, whereas "humans understand humans", and did not want "computers assessing how at risk a child is" because such assessments can be construed as fact. Focus group participants emphasised that AI won't pick up on body language or tone and "doesn't know what's actually going on".

The fear: being reduced to an AI summary, like they've been reduced to notes

"I don't think children should be reduced to AI summaries. (...) I don't think even humans can really ever do that fully." [Sam]

One Cautiously Curious participant emphasised: "everyone here has different experiences of care... we all have different trauma. (...) without being care experienced yourself, it would be difficult to even design" an AI that captures this complexity.

Focus group participants agreed wholeheartedly that, when summaries are constructed for specific purposes, "what you lose in that process is more than what you would gain" [Cautiously Curious]: the humane sense-making is lost. Additionally, Sam worried that guides for young people created by Policy Buddy would be "impersonal", missing the translation between policy written "for adults" and real lived struggles. They contrasted this with a charity that hired care-experienced people to create resources: "[they] could write and create with the nuance of knowing what could go wrong."

What this means

Care-experienced participants and social workers shared remarkably similar concerns: both worry about accuracy, lost nuance, and AI summaries replacing genuine understanding. Young people specifically fear intensifying what they've already experienced—being reduced to incomplete, judgmental case notes.

These findings align with research describing case records as 'time-capsules' where children are primarily objects of inspection, only sometimes co-creators of their stories. My research reveals care-experienced young people are acutely aware of how they've been written about and its consequences.

The findings caution against viewing AI solely as a means to increase accuracy. Whilst exact transcripts capture what was said, they don't capture why, the context, or the non-verbal communication that shapes meaning. The Safeguarding SW's concern about becoming self-conscious reveals how recording technology may change the quality of conversation—a shift from relationship-building to assessment-gathering that young people already fear.

When it summarises and writes text, AI makes decisions about what to include, interpret, and emphasise. Starting from AI-generated summaries risks limiting the exploratory thinking and holistic understanding participants identified as essential. Young people know the difference between being documented and being known.

What this means for AI product development and care practitioners

The challenge isn't to make AI transcribe perfectly or summarise efficiently. The challenge is to resist AI's inherent tendency toward reduction and instead design systems that expand practitioners' capacity to see children as whole people.

For AI designers and developers:

- Build features that surface complexity, not just summaries — Instead of condensing to a single narrative, highlight contradictions and missing information. When children's views conflict with professional assessments, flag it. When wishes aren't recorded or strengths aren't documented, make that visible. The goal is to expand understanding, not provide quick answers.

- Make bias visible and questionable — Flag emotionally charged language in care records in real-time. Prompt reflection: "This uses 'aggressive' or 'difficult'—is this observation or interpretation? What might this look like from the child's perspective?" Build in friction requiring practitioners to actively choose subjective language rather than defaulting to it. This matters because AI trained on historical records risks amplifying existing patterns of deficit-focused, adult-filtered documentation.

- Enable verification, not just generation — Give young people access to AI-generated transcripts and summaries before they're finalised. Let them flag inaccuracies, add context, or dispute interpretations. This is about co-creating the narrative that will shape their care—not just producing cleaner documentation.
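As a rough sketch of the first two suggestions above (surfacing gaps and prompting reflection on charged language), the following shows one possible shape for such a feature. Everything here is an assumption made for illustration: the field names `child_views`, `wishes`, `strengths`, and `notes`, the two-word term list (taken from the example prompt above), and the `review_record` function are hypothetical and do not describe any existing product. The sketch only raises questions for a practitioner; it does not rewrite or score anything.

```python
import re
from dataclasses import dataclass

# Assumption: a tiny illustrative word list. "aggressive" and "difficult" are the
# article's own examples; a real list would need co-design with care-experienced people.
CHARGED_TERMS = ("aggressive", "difficult")

# Assumption: hypothetical field names for what a record should contain.
EXPECTED_FIELDS = ("child_views", "wishes", "strengths")

REFLECTION_PROMPT = (
    "Is this observation or interpretation? "
    "What might this look like from the child's perspective?"
)


@dataclass
class Flag:
    kind: str    # "missing" or "charged_language"
    detail: str  # the field name or the term that triggered the flag
    prompt: str  # question shown to the practitioner


def review_record(record: dict[str, str]) -> list[Flag]:
    """Return flags for a practitioner to consider; nothing is rewritten or scored."""
    found: list[Flag] = []
    for field in EXPECTED_FIELDS:
        if not record.get(field, "").strip():
            label = field.replace("_", " ")
            found.append(Flag("missing", field, f"No {label} recorded. Can this be added?"))
    notes = record.get("notes", "")
    for term in CHARGED_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", notes, re.IGNORECASE):
            found.append(Flag("charged_language", term, REFLECTION_PROMPT))
    return found


# Invented example record, not real case data.
example = {
    "notes": "Foster carer reports he was aggressive at dinner.",
    "wishes": "Wants to see his sister every week.",
    "strengths": "",
}
for flag in review_record(example):
    print(f"[{flag.kind}] {flag.detail}: {flag.prompt}")
```

The design choice worth noting is that the output is a list of questions rather than a corrected record: the friction stays with the practitioner, who decides whether the language is justified and whether the child's own views can still be added.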

For children's social care practitioners:

- Treat AI outputs as starting points for exploration — When AI surfaces information from records, use it to generate questions rather than conclusions. If AI flags repeated conflict in a child's history, ask: what's underlying this? What support might help? What is this child trying to tell us? Actively document strengths, interests, and what makes this child laugh—not just the crises that brought them into care.

- Use AI to create space for relationship-building—and be aware of what changes — If AI handles administrative tasks, redirect that time to unhurried conversations and genuine presence. But be alert to how recording technology may change the quality of the conversation itself. The Safeguarding SW's concern about becoming "conscious about what I say, because I know I'm being recorded" matters. The goal is to be more present, not to perform for a transcript.

- Question bias in documentation, including your own — When reviewing AI-generated summaries or drafting records, ask: am I describing behaviour or interpretation? What might this look like from the child's perspective? Resist the pull toward deficit-focused records that children will one day read and that will shape every future placement and professional relationship.

- Explain AI use honestly — Tell young people when AI is being used in their meetings, how it works, and what they can do if uncomfortable. Transparency about technological processes is part of treating young people with dignity—not an administrative formality.

For local authorities and policymakers:

- Measure quality of care, not just documentation efficiency — Don't assess AI success solely through time saved on recording. Ask: do young people feel heard? Do they see their wishes reflected in plans? Do they have stable relationships with practitioners who know them as whole people? The detailed exploration of these questions is taken up in AI within children's social care systemic constraints—from 'case' to care.

- Build mechanisms for young people to access and shape their records — Create systems where children can review what is written about them, flag inaccuracies, and add their own perspectives. Give young people the same access to AI tools that social workers use to navigate their records and understand their rights. This isn't about creating extra processes—it's about treating young people as co-creators of their own stories, not objects of inspection. The full case for young people's direct AI access is made in AI within children's social care systemic constraints—from 'case' to care.

AI in children's social care must help practitioners see children as whole people with complex lives, evolving identities, and futures beyond case files. The technology should never reduce children to summaries—it should expand understanding of their full humanity. As Sam said: "I don't think children should be reduced to AI summaries. I don't think even humans can really ever do that fully." That's not a limitation to overcome—it's a reality to design around.

Continue exploring this series on AI in UK children's social care, what care-experienced young people actually want: AI as a facilitator of information in care—encouraging better support conversations | AI within children's social care systemic constraints—from 'case' to care

Composed with the help of AI, drawing on my dissertation in Sociology of Childhood and Children's Rights (UCL, 2025).