This is part of a series exploring care-experienced young people's perspectives on AI in UK children's social care.

This article examines the structural forces shaping children's experiences in care—and what care-experienced young people fear when AI is positioned as the solution to a system that already makes them feel like burdens. In this article, you'll explore:

- Why systemic context matters — a care-experienced young person's testimony reveals what's at stake when AI meets an already under-resourced system

- How children's social care's systemic challenges shape care experiences — how underfunding, high caseloads, and worker turnover create the conditions AI is now expected to fix

- AI within systemic constraints — how AI is being positioned as an efficiency solution, and why that framing concerns care-experienced young people

- What care-experienced young people and social workers told me — four key findings from my research on AI's risks and possibilities within an unequal system

- What this means for AI product development and social care practitioners — practical steps to ensure AI redistributes power rather than reinforcing it

Why systemic context matters: Lamar's testimony

In February 2025, Lamar, a 19-year-old care-experienced young person, testified before the House of Commons Education Committee. Their account contributed to a report recommending that young people be consulted to improve services. Their testimony reveals why that recommendation—like so many before it—is urgently needed.

Lamar described a childhood defined by instability: frequent placement changes, learning to navigate four different areas in six months, making unintentional choices with lifelong consequences. Speaking to Parliament, they reflected: "For foster carers and for social workers too, we have all been let down by the system." And despite doing everything asked of them—A-levels, a part-time job, navigating benefits and housing at 18—they described "a pit in my stomach that is absolutely terrified for the future."

I open this article with Lamar's testimony because it reveals why these problems endure despite repeated policy interventions. The systemic challenges Lamar describes—inadequate information, fractured relationships, administrative chaos—persist across decades of reviews, from the Munro Review of Child Protection (2011) to the MacAlister Review (2022). They raise fundamental questions: what structural forces shape care experiences? And as AI is positioned as the solution to social work deficiencies, might it risk exacerbating the problems it aims to solve?

How children's social care's systemic challenges shape care experiences

The 2025 report of the House of Commons Education Committee into the state of Children's Social Care in England reiterated systemic challenges documented over the past 20 years with minimal signs of improvement: increased numbers of children and families needing support; reduced budgets despite rising costs; social worker turnover and lack of training preventing sustained relationships with service users; and detrimental impacts on children's experiences and futures.

Against these pressures, the UK government has positioned technology adoption, particularly AI, as essential for addressing service demand while reducing costs. Organisations pursue automation driven by the promises of efficiency, an ideal that envisions technological systems that operate without error and deliver predictable outcomes. However, critics warn that AI may reproduce bias and inequality, and amplify existing challenges for service users.

The MacAlister Review (2022) highlights a system that prioritises bureaucratic and formal structures over authentic personal relationships and community networks. These processes create significant barriers to children's participation, with time constraints severely impacting trust and relationship-building. When social workers focus solely on collecting evidence for decision-making rather than building relationships, this creates significant tension with the values of relationship-based practice.

Research by O'Keefe and colleagues shows that 56% of practitioners agree case recording takes time away from direct work with children. High caseloads, low resourcing, and administrative demands significantly impact professionals' ability to build meaningful relationships with children. Research has documented that current technology diverts social workers' attention from meeting children's needs to satisfying forms, processes, and inefficient systems—practitioners experience software that is difficult to use and time-consuming, requiring repetitive data entry across systems and preventing timely access to information from other professionals.

AI within systemic constraints

Administrative work, including form-filling and record-keeping, consumes 60-80% of social workers' time and is often conducted outside official working hours. Technology has demonstrated the ability to produce significant time savings: the MacAlister Review found a 48% reduction, while AI tools like Magic Notes demonstrate potential to save 8 to 10 hours a week.

Research identifies opportunities in digital communication tools: enhanced accessibility, more direct contact between children and social workers, and increased flexibility through asynchronous communication giving young people choice over when to respond. However, significant challenges exist: not all service users have access to technology; social workers struggle to assess risk through digital channels; and tools risk shifting from relationship-building to surveillance. Practitioners express concern that efficiency gains can come at the cost of the relational work that makes care meaningful.

This tension—between efficiency promise and relational reality—is precisely what care-experienced young people in my research had most to say about.

What care-experienced young people and social workers told me

Values-first: caution around how time-saving will actually be used

The Safeguarding SW initially worried AI felt like "an intrusion" into relationship-based practice: "I put my values around relationship building... using MI technique to build that relationship so that the person felt listened to and understood, and that is the core value of how I feel as a safeguarding social worker."

The Independent SW was clear that time-saving wouldn't be as significant as hoped: "So there will be some time saving but I don't think it's going to be as great as people perhaps think it might be... I couldn't with a clear conscience let a machine do the judgments in isolation... Any time saved should be spent doing direct work with the client always... but I also think we need to be careful around assumptions around time saving, because you are still going to have to go back and read all of that and make sure you know that it matches with your analysis."

Time-saving alone addresses symptoms, not underlying problems. What matters for care-experienced participants is how that time gets used. Despite their caution, all participants saw potential in AI enabling more informed decisions and a more comprehensive understanding.

Care-experienced participants expected AI to be available to them—not just social workers

When it came to accessing transcripts, recordings, and assessments, browsing records, and understanding the regulations and policies affecting their rights, care-experienced individuals wanted the same AI tools as social workers. Participants saw the tools as beneficial for social workers, but saw young people's own access as groundbreaking and empowering: a tool not just to make existing systems more efficient, but a potential means to fundamentally reshape the quality of care and redistribute power.

Jessie imagined: "So this is the kind of tool that would also be accessible to younger people as well, if they want to see records about themselves... Because it's going to help the social worker to do their job. But it's going to help the child to live more comfortably, if they have access to the information about what's going on with them."

Sam saw incredible potential in AI creating equity in navigating policies and understanding rights: "when I was in care there was like a charter for Care leavers... it was like 30 plus pages long... if I had a question that was like, 'I'm going to college. Can I get money for the bus?' I would want AI to be able to say, 'yes, [the council] provides up to 20 pounds a week for bus.'" They described manually searching the Children Act for hours to find one sentence to challenge their local authority.

The Cautiously Curious agreed, acknowledging it would help with "leveling, how to understand your rights, like, it's really complicated if you just go and read through your rights, but if you can go in and ask, like, a certain topic... What rights do I have that would be really, really useful."

How should time saved actually be used?

If AI does save time, participants had clear visions for how it should be spent—none of which involved simply processing more cases. Five priorities emerged:

- Check accuracy and original information — The Sceptically Suspicious emphasised: "Making sure that everything is accurate... if they're gonna use a tool, have you got to check the tool." Social workers still need to read original information and can't rely solely on AI summaries. The Independent SW was clear: "I want to hear it from them."

- Dive into detail and switch to action — Jessie saw opportunities to move beyond information collection: "If an AI is already capturing all the things they want to... which uni they want to go to... you could immediately just go straight into. Oh, you want to go to Uni. Okay, let me show you how to set up a Ucas account... let me pull up all these tools and things that we have to help you."

- Think long-term and holistically — Sam explained: "I think we spend so much time focused on like firefighting and doing the bare minimum to keep a young person alive. They don't really get to thrive." Time saved would enable thinking "more holistically around the child" and having "conversations where they could ask the child about their wants, their needs... raising aspirations."

- Spend quality time building real relationships — The Sceptically Suspicious described what would make young people feel valued: "going out for food, playing games... spending quality time... it is always about creating relationships with people", emphasising "social workers should go and actually see the person... Need to create a connection with the young person."

- Collaborate to improve services — The Sceptically Suspicious wanted time used to "work together with other social workers to make changes + improvements".

The fear: AI reinforces feeling like a case to be processed

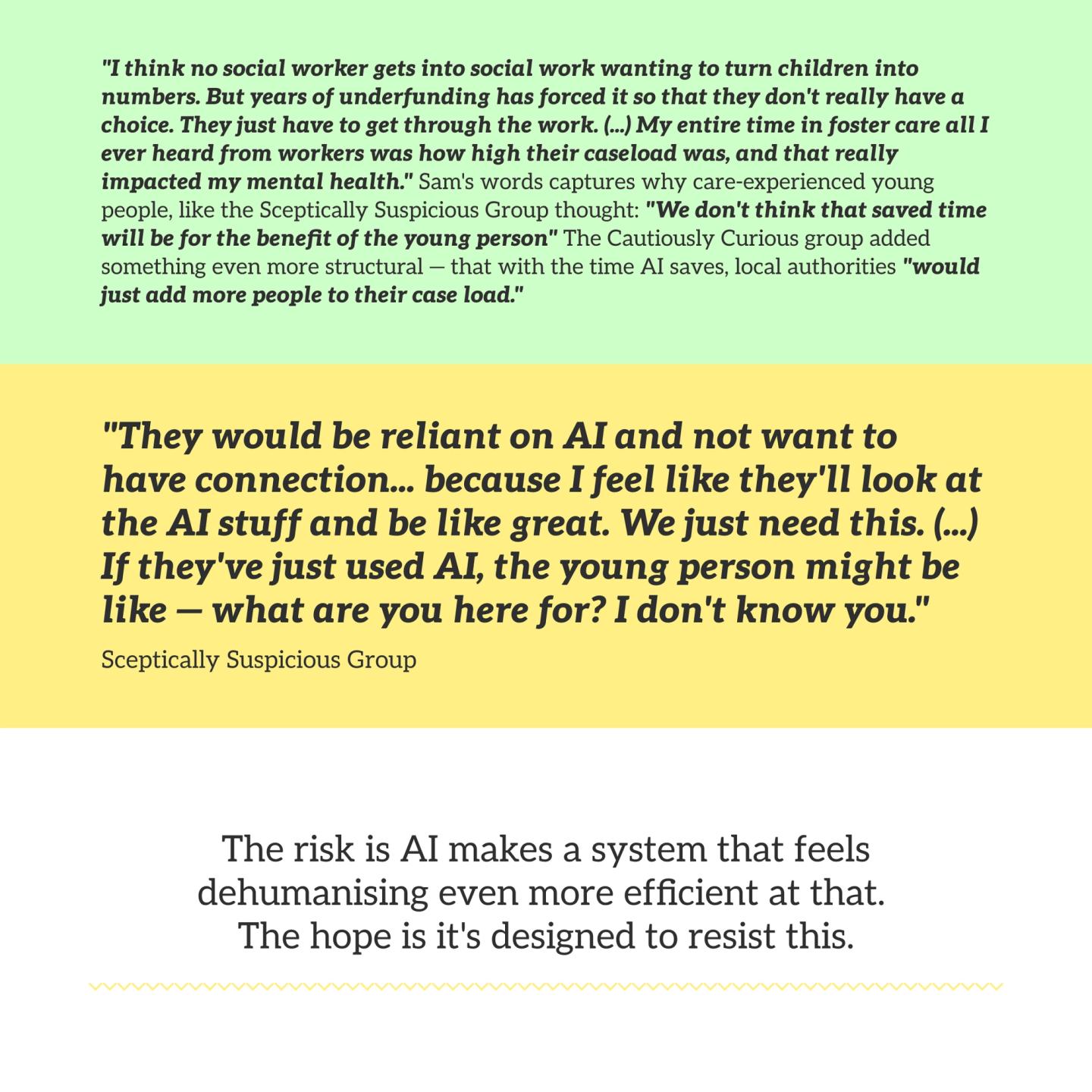

Sam described something that shaped everything else in their interview: "my entire time in foster care all I ever heard from workers was how high their caseload was, and that really impacted my mental health... they never had time for meetings, and everything was always very rushed." This constant message about high caseloads didn't just explain rushed meetings—it made young people feel like burdens.

Jessie named it directly: "I'm not actually being cared about... they're just kind of processing me." The Sceptically Suspicious feared AI would intensify this: "AI taking away human connection... Can take away from the real meaning of what's happening." They worried about risk scoring: "scoring them is going to make them feel horrible about themselves."

And they didn't believe saved time would actually reach young people: "They would be reliant on AI + not want to have connection... because I feel like they'll look at the AI stuff and be like great. We just need this." The Cautiously Curious worried local authorities "would just add more people to their case load"—that AI becomes justification for not hiring more social workers.

What this means

Focusing solely on time saved, though easier to measure, misses what matters most for care-experienced participants: the quality of social workers' practice and young people's lived experiences. Sam's words cut to it clearly: "all I ever heard from workers was how high their caseload was, and that really impacted my mental health." The problem isn't just efficiency. It's that the pressures of an under-resourced system communicate to children that they are a burden, not a priority.

Crucially, participants fundamentally reframed the question from 'how can AI help social workers?' to 'how can AI empower us to understand our rights and participate meaningfully in our own lives?' Young people didn't just fear AI would make them feel 'like a case' — they explained they already feel this way, and AI risks intensifying it unless intentionally designed otherwise.

The Sceptically Suspicious believed social workers wouldn't actually spend saved time with young people — but would instead take on more cases. This scepticism isn't unfounded pessimism. It reflects young people's lived experience of being deprioritised despite rhetoric about their importance. It challenges the assumption in current AI adoption discourse that benefits will automatically trickle down to young people if social workers save time.

Instead, care-experienced participants in my research call for AI intentionally designed as a shared resource, reflecting what Jessie called the difference between "it's your job" and "it's my life." Resisting AI's tendency to deepen dehumanisation requires intentional design that prioritises the quality of caring and being in care over time savings. That means redistributing power: giving young people access to the same tools as social workers, designing AI that sees them as whole people with futures beyond their case files, and measuring success not by how many cases are processed but by whether young people feel genuinely cared for.

What this means for AI product development and care practitioners

Without addressing underlying systemic constraints—underfunding, high caseloads, crisis response culture—AI risks making social workers more efficient at processing more children rather than supporting them. Measuring success through efficiency metrics alone fundamentally misses the point.

For designers and developers:

- Redefine success from efficiency to quality of care — Design AI that measures what actually matters: Do young people feel heard? Understand their rights? See their wishes reflected in plans? Have stable, meaningful relationships? Build in metrics that track relationship continuity, frequency of direct contact time, young people's understanding of their care plans, and satisfaction with participation in decisions. If your tool only measures time saved, that’s a very incomplete picture.

- Build features that resist dehumanisation — Surface children's interests, aspirations, and strengths—not just risks and deficits. Flag when children's wishes haven't been recorded or conflict with professional assessments. Identify when young people repeatedly ask for support that isn't being provided. Make visible the child as a whole person, not just a case to be processed. The Sceptically Suspicious's fear—that scoring children "is going to make them feel horrible about themselves"—is a design problem as much as a practice problem.

- Create tools for young people's direct access — Build AI interfaces that translate complex policies into accessible guidance. Enable young people to search their own records, understand their history, and verify transcripts and assessments. Design features that connect young people's wishes to available resources and statutory entitlements. This is the most consistently urgent call from every care-experienced participant in this research—and the most consistently absent from AI adoption discussions in children's social care. It isn't an add-on. It's what makes the difference between "it's your job" and "it's my life."

For children's social care practitioners:

- Use saved time for relationship-building, not case processing — If AI handles administrative tasks, redirect that time to unhurried conversations and genuine presence. Think long-term and holistically—not just firefighting crises. The Sceptically Suspicious group named exactly what quality time looks like: "going out for food, playing games, spending quality time... it is always about creating relationships with people."

- Don't let AI become a substitute for seeing the child — The Sceptically Suspicious's fear was specific: that social workers would look at AI outputs and think "great, we just need this." Resist that pull. Read original information. Hear children's stories directly from them. Recognise that no AI summary captures why a child said something, what their face looked like when they said it, or what they were holding back. Direct engagement with the child is irreplaceable—not because AI makes mistakes, but because relationship is the work.

- Advocate for caseload reductions, not just efficiency gains — Push back against expectations that AI should enable processing more children. Insist that time savings go to reducing caseloads so you can spend more time with each child. Collaborate with other social workers to improve practice, not just work faster. This was the advice from young people in my research.

- Share AI tools with young people — Give young people access to AI tools that help them understand their rights, review their records, and verify transcripts. Treating young people as partners in using AI tools is an act of participation—and it's something you can encourage.

For local authorities and policymakers:

- Invest time-savings in caseload reduction, not capacity increases — Use efficiency gains to reduce caseload numbers and protect time for direct work, so social workers can spend more time with each child, think long-term, and build relationships. Without this commitment, every hour AI saves becomes an hour filled with another child's case—and the Cautiously Curious were right to predict it.

- Measure quality of care, not just efficiency — Track relationship continuity: how long young people have the same social worker. Monitor frequency and quality of direct contact. Measure young people's understanding of their care plans and their satisfaction with participation in decisions. Evaluate whether support is genuinely tailored to individual needs. These are the metrics that reveal whether AI is working for young people—or just working faster.

- Make young people's direct AI access a structural requirement, not a future aspiration — Provide young people with access to the same AI tools social workers use to understand policies, rights, and options. Create systems where children can review their records, flag inaccuracies, and add their perspectives in real time. Sam's experience of searching the Children Act for hours to find one sentence to challenge a local authority decision shows an inherent barrier to participation. AI can dramatically change access to that information.

- Ensure AI integration accompanies systemic change — AI cannot substitute for investment in workforce capacity, reduced caseloads, and protected time for direct work. Without that structural commitment, AI risks making a stretched system more efficient at the wrong things.

Continue exploring this series on AI in UK children's social care, what care-experienced young people actually want: AI as a guiding storyteller in care—uncovering stories and understanding | AI as a facilitator of information in care—encouraging better support conversations

Composed with the help of AI, drawing on my dissertation in Sociology of Childhood and Children's Rights (UCL, 2025).