This is part of a series exploring care-experienced young people's perspectives on AI in UK children's social care.

This work began with an idea sparked by a Netflix series in 2021 and led to workshops in 2025 with care-experienced young people exploring AI's role in UK children's social care. One quote from my research captures everything:

"There's a difference between oh, it's your job, and oh, it's my life!" — Jessie

Why this research matters

Across England, 28 councils are testing AI for case note writing and automatic transcription in children's social care. Social workers are using tools like Magic Notes to transcribe meetings, summarise conversations, and help write assessments. AI systems are being explored to predict risk, connect information across organisations, and make children's care processes more 'efficient.'

For example, in Swindon, a Magic Notes pilot reported a 63% reduction in admin time. North Yorkshire Council is developing prototypes that integrate data from multiple sources—creating comprehensive case overviews with AI-powered search and summaries to identify connections and patterns across a child's records. Lincolnshire Police trialled CESIUM, an AI system designed to transform child safeguarding by analysing data to support early intervention decisions.

Meanwhile, predictive risk assessment tools—like the Allegheny Family Screening Tool in the US—use algorithmic decision-making to score referrals, helping overwhelmed caseworkers prioritise cases. UK trials of similar systems have had mixed results. Hackney Council's Early Help Profiling System was discontinued after unsuccessful outcomes and public concerns over transparency and families' lack of knowledge about its use.

Yet there are voices notably absent from all of this development: the young people whose lives these technologies will shape.

When I watched The Trials of Gabriel Fernandez on Netflix—about an 8-year-old boy who died despite multiple reports to US child protection services—I saw AI positioned as the solution. In the UK, the government's AI Opportunities Action Plan positions AI adoption as essential for addressing service demand while reducing costs across public services. Against pressures of increased numbers of children needing support, reduced budgets, and social worker turnover, technology—particularly AI—is being framed as the answer to systemic challenges that have persisted for decades.

I can't help but wonder: How are these technologies being designed? Based on whose voices and needs? Were care-experienced young people involved? Did anyone ask them about the real-life impact on them?

The exclusion of young people's voices in emerging technology is not new. UNICEF's consultation with adolescents worldwide about AI revealed that most "stated strongly that decision-making about AI is adultcentric" and wanted to be heard and involved in AI development. So my research centred on exactly that: hearing young people's perspectives on AI use in the children's social care services designed to support them.

What young people told me

Their perspectives revealed three interconnected findings that challenge how we're currently approaching AI in children's social care:

💫 Young people want access to the same AI tools as social workers

To check AI transcripts, summaries, and case records. To explore policy, understand assessments, and navigate their identity, stories and rights—information not always made accessible to them.

As one participant explained: for social workers, AI is part of their work; a case is one of many. For children and young people in care, it's their whole life at stake. Young people should be able to access the same tools to understand what's being said about them, verify accuracy, and understand their rights in real-time rather than discovering years later what was written about them.

Read the full article: AI as a guiding storyteller in care—uncovering stories and understanding

🌱 Young people don't want to be reduced to AI summaries

They're acutely aware of how they've been written about—filtered through professional interpretation, reduced to negative behaviours, judged before social workers even meet them. They see power in AI helping diversify perspectives with honest curiosity, not creating reductive narratives.

Even when transcribing or writing assessments, AI makes decisions about what to include, interpret, and emphasise. In contexts this sensitive, who gets heard, how voices are portrayed, and what gets interpreted matters profoundly. Young people fear being "reduced to AI summaries" the same way they've already been reduced to incomplete, judgmental case notes.

Read the full article: AI as a guiding storyteller in care—uncovering stories and understanding

🤖 Young people want AI to augment social worker expertise, not replace it

They imagined more productive conversations where support information is readily available and AI puts social workers and young people on a level playing field of information. This means designing AI-enabled workflows that reward critical thinking rather than inadvertently incentivising complacency.

AI can handle admin, but not the crucial "so what?" question—what does this mean for the child, what support do they need? That requires human expertise. Young people emphasised that AI should help social workers think long-term, holistically, relationally—not just process cases faster.

Read the full article: AI as a facilitator of information in care—encouraging better support conversations

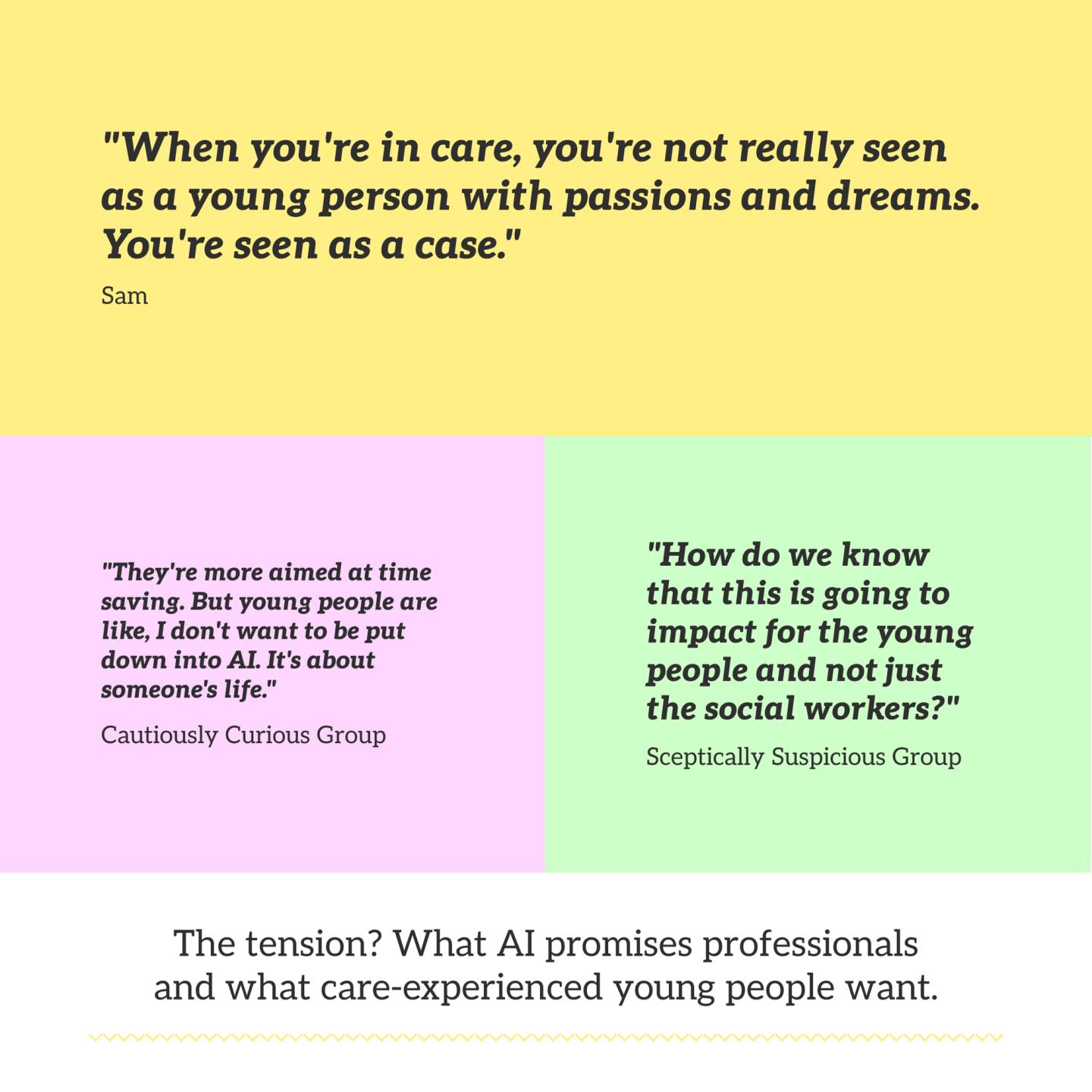

❤️ AI must be oriented to quality of life, not just time-saving

In resource-constrained systems, young people in care hear constantly about high caseloads. It makes them feel like burdens. They're sceptical that saved time will benefit them rather than just enabling more case processing.

The tension: care-experienced young people support AI's promise to save time, but those savings must go to relationship-building and long-term thinking. Without addressing underlying systemic constraints—underfunding, high caseloads, crisis response culture—AI risks making social workers more efficient at processing children rather than supporting them.

Read the full article: AI within children's social care systemic constraints—from 'case' to care

What this means

The question isn't whether AI belongs in children's social care or how much time it saves. It's whether adults are willing to share power so that young people feel genuinely heard and understood and have genuine choices, with practitioners who know them as whole people with futures beyond their care cases.

Success should be measured not solely through time saved or costs reduced, but through whether young people feel heard, understand what's happening, have genuine choices, and experience stable, meaningful relationships with practitioners who know them as whole people.

Care-experienced participants insisted that AI's power must be genuinely shared. Young people should have access to the same tools as social workers to understand their rights and records, review transcripts and assessments, and navigate policies. This directly challenges protectionist approaches and represents a fundamental call for redistributing power.

Explore the research

My findings raise critical questions about how we design, deploy, and experience AI in children's social care. I've written a detailed series of articles exploring what care-experienced young people envisioned and offering a framework for designing beyond the tool itself—examining AI's outputs, the workflows it creates, and the ways it can resist or reinforce systemic pressures. These articles centre care-experienced young people's perspectives and build on conversations with social workers and professionals doing exceptional work in this space.

Part 1: AI as a guiding storyteller in care—uncovering stories and understanding

Part 2: AI as a facilitator of information in care—encouraging better support conversations

Part 3: AI within children's social care systemic constraints—from 'case' to care

Moving forward: Cared—AI-powered care records assistant

Care-experienced young people's lives are documented extensively by social services, yet these records remain largely inaccessible and incomprehensible to them—filled with fragmented narratives, professional jargon, filtered voices, negative framing, and missing context about decisions that shaped their lives.

In February 2025, Lamar, a 19-year-old care-experienced young person, testified to the House of Commons Education Committee about the devastating impact of being uninformed whilst in care. At age 12, when reality hit that they weren't going back to their parents, Lamar "spiralled into devastating mental health problems" and now "cannot remember most of [their] childhood as a consequence of this".

However extensively care records document children's time in care, they remain disconnected from children's lived experience.

So I'm experimenting with developing Limn: a privacy-first AI tool that helps young people see themselves holistically across their care records, not just through the crisis-focused lens of care documentation. Running entirely on the young person's own device, the AI aims to surface positive aspects buried in records, explain jargon, identify gaps, and connect young people to their rights through accessible explanations of relevant legislation.

This is early work—I'm building a prototype inspired by what young people told me in my research. There's much more to learn, iterate, and co-design with care-experienced young people. I'll share progress, learnings, and next steps as it develops.

More to come… ❤️🤖🌱💫

Composed with the help of AI, drawing on my dissertation in Sociology of Childhood and Children's Rights (UCL, 2025).